Less filling?

The Imperative/Object vs. Functional/Declarative divide has confused many a beginning developer, and even the seasoned developer writing this article. Here I attempt to shed some light.

Why FP? For starters, controlling object hierarchies and keeping track of object state is one of the most difficult challenges of OO design. Additionally, we live in a world of interactions, not models in a fixed state. FP aims to simplify those interactions by reducing the scope of what could possibly go wrong. FP aims to create fixed, 100%-predictable-at-runtime operations vs. the polymorphism and dynamic composition so often seen in OO.

Huh? 😕

Many an FP project was begun as a plain-JavaScript NodeJS project, only to adopt TypeScript when the need for type-checking and compilation became too obvious to ignore. Why is this?

There seems to be a push and pull between the flexibility that JS offers (the kind of "super dynamism" inherent in the JS prototype model) and the adherence to SOLID principles that typed modeling with pre-compilation encourages. When implemented reasonably and practically, FP can greatly simplify a project, or at least simplify the parts of its code that lend themselves to lambdas.

The Virtues of FP

- FP's versatility lies in its ability to abbreviate logical statements with lambda calculus, thereby shrinking the solution space and reducing cognitive load for the developer (for enthusiastic students of Calculus theorems, at least 😃)

- Also => x => x.Happiness
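As a small illustration of the lambda shorthand in the list above, here is a minimal TypeScript sketch. The `Person` type, its `happiness` field, and the sample data are hypothetical, invented only for this example.

```typescript
// Hypothetical type and data, made up for illustration only.
type Person = { name: string; happiness: number };

const people: Person[] = [
  { name: "Ada", happiness: 9 },
  { name: "Bob", happiness: 4 },
];

// A lambda (arrow function) that projects one field out of a value.
const getHappiness = (x: Person): number => x.happiness;

// Passing the lambda to map replaces an explicit loop and accumulator.
const happinessScores: number[] = people.map(getHappiness);

console.log(happinessScores); // [ 9, 4 ]
```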

The Virtues of OOP

- Since the release of Smalltalk, OOP has seen widely successful iterations in Java and C#

- Its footprint is vast and its legacy rich (C# 10, .NET 6, Java 18)

- OO development is still the best way humans have to model entities and complex hierarchies; FP does not magically solve or remove the OO state-tracking problem (and actually makes debugging less transparent in many cases), it merely pushes it to another corner of the development space (the function implementations).

When Alan Kay coined the term “Object Oriented Programming” in the 1960s he had a background in biology and was attempting to make computer programs communicate the same way living cells do. Kay’s big idea was to have independent programs (cells) communicate by sending messages to each other. The state of the independent programs would never be shared with the outside world (encapsulation). Said Kay years later, after OO had achieved market dominance: "I’m sorry that I long ago coined the term “objects” for this topic because it gets many people to focus on the lesser idea. The big idea is messaging."

FP simplified: function interaction for behavior vs. object interaction for behavior.

Indeed.

In my experience with it, FP yielded successful programs, but I never felt that I had intimate knowledge of how things were working outside of the modules I implemented or modified, because the code was not inherently readable (to me, that is). When things go wrong, that can be a problem no matter how predictable the (wrong) results are. Certainly, with time and exposure, an OO developer can learn to reason about FP code as effectively as OO code, if not more so; a lot of this debate is, at its core, a preference of style and mental model and has no right or wrong answer.

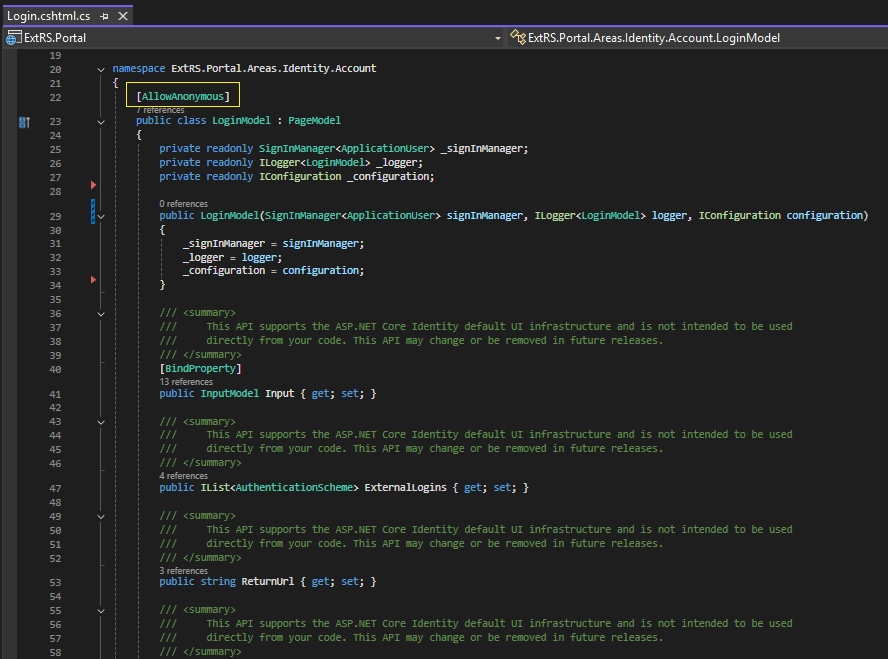

Oftentimes, the best decision is to use a little bit of both OO and FP. With the introduction of lambda expressions in C#, .NET became a veritable proving ground for software developers who knew they could greatly simplify .NET code by flattening all those convoluted and bug-prone foreach and for loops into simple lambda maps. Making certain properties readonly in an OO project will shine a (useful) bright light on just how much your code has spiraled out of control... you know?
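One way to try the readonly experiment is sketched below in TypeScript rather than C#. The `Account` type and `deposit` function are hypothetical; the sketch only assumes that marking properties `readonly` turns accidental mutation into a compile-time error and nudges the code toward returning new values.

```typescript
// Hypothetical domain type; every property is readonly.
type Account = {
  readonly owner: string;
  readonly balance: number;
};

// Instead of mutating the account, return an updated copy.
function deposit(account: Account, amount: number): Account {
  // account.balance += amount; // would not compile: balance is read-only
  return { ...account, balance: account.balance + amount };
}

const original: Account = { owner: "Ada", balance: 100 };
const updated: Account = deposit(original, 50);

console.log(original.balance, updated.balance); // 100 150
```

Every place in an existing codebase that stops compiling after such a change is a place that was quietly mutating state.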

For those who claim that FP will magically prevent all bugs, don't even try obfuscating the truth:

You cannot run away from or completely shield yourself from complexity.

Not by declaring, "we are an FP team now, as such all behavior must be function interactions, no mutable state anywhere!" That isn't what FP promises. Implemented practically, however, FP is a far superior solution for certain use cases and certain development teams (think: very math-oriented software engineers).

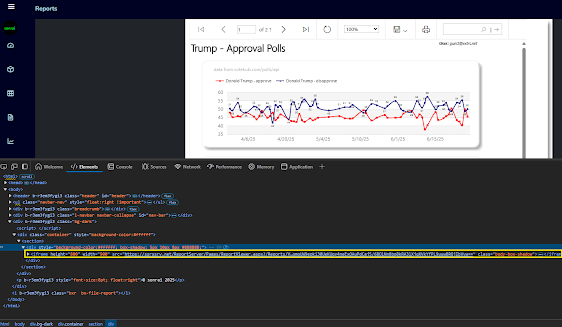

In this instance, the case for FP is clear; in .NET we can use FP within OO via lambda functions, which achieve the simpler code on the right.
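The screenshot itself is not reproduced here. As a rough analogue of the same loop-versus-lambda comparison, written in TypeScript rather than C#, consider the sketch below. The `Order` type, the data, and both function names are hypothetical.

```typescript
// Hypothetical data, for illustration only.
type Order = { id: number; total: number; shipped: boolean };

const orders: Order[] = [
  { id: 1, total: 20, shipped: true },
  { id: 2, total: 35, shipped: false },
  { id: 3, total: 50, shipped: true },
];

// Imperative version: a loop, a mutable accumulator, and a branch.
function shippedTotalsLoop(input: Order[]): number[] {
  const result: number[] = [];
  for (const order of input) {
    if (order.shipped) {
      result.push(order.total);
    }
  }
  return result;
}

// Functional version: the same logic as a filter followed by a map.
const shippedTotalsLambda = (input: Order[]): number[] =>
  input.filter((o) => o.shipped).map((o) => o.total);

console.log(shippedTotalsLoop(orders));   // [ 20, 50 ]
console.log(shippedTotalsLambda(orders)); // [ 20, 50 ]
```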

FP Drawbacks and warnings

- Sometimes not feasible when trying to model application entities and their behaviors

- The same brevity that some developers love in FP can cause readability issues for others

- Often the tests for FP code become so large that any gain in brevity is effectively negated

OO Drawbacks and warnings

- Type modeling can lead to an excessive number of types, increasing program size and complexity

- Bugs related to state mutations as a result of function/object side-effects

- More problem space for things to go wrong
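The state-mutation drawback in the list above can be sketched in a few lines of TypeScript. The `Cart` class and both functions are hypothetical; the only library behavior relied on is that `Array.prototype.sort` sorts in place.

```typescript
// Hypothetical example of a bug caused by a hidden side effect.
class Cart {
  items: string[] = [];
}

// Looks like a harmless query, but it sorts the caller's array in place.
function firstAlphabetical(cart: Cart): string | undefined {
  return cart.items.sort()[0];
}

// Side-effect-free variant: sort a copy and leave the cart untouched.
function firstAlphabeticalPure(cart: Cart): string | undefined {
  return [...cart.items].sort()[0];
}

const cart = new Cart();
cart.items.push("pear", "apple");

firstAlphabeticalPure(cart);
console.log(cart.items); // [ 'pear', 'apple' ]  (insertion order preserved)

firstAlphabetical(cart);
console.log(cart.items); // [ 'apple', 'pear' ]  (order silently changed)
```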

More than any of the three FP drawbacks listed earlier, the biggest problem I have with functional programming (in the Node ecosystem at least) is that the entire supply chain is open source, and that can lead to neglected, abandoned, sabotaged or broken projects without warning. Love or hate Microsoft, they make sure (some) bugs are fixed and libraries are maintained.

When a NodeJS package/module that you depend on becomes obsolete or is compromised, it forces teams to find an alternative and, in the worst cases, entirely rebuild functionality with completely new dependencies.

And related to this, there is a bit too much over-distribution/splintering of source code going on with many (most?) FP Node projects. 20 dependencies in a project, sure. But I've worked on Node projects that only involved a few functions, yet relied on hundreds of NPM package dependencies, many of which were several versions out of date (and couldn't be updated to current, because, well y'know, it's a breaking Node package update! Weeeeee... 😃 (C'est la vie))

😆

On the flipside, there is something immensely liberating about having not only a completely commercial-free OS like Linux, but also, free IDEs and a worldwide bazaar of NPM packages that fulfill virtually any development requirement that one might ever encounter.

FP style emphasizes ensuring your code has "high signal-to-noise ratio"; when things break, we want to know where it hurts and why, immediately. To paraphrase Kent Beck in his book on unit testing, stack traces of poorly written integration tests and production failures often tell us there is a problem in our application, "but, where oh where?".

If we keep our abstractions tightly wound and limited (and oh boy does FP do that in spades), we will immediately become aware of any issue in our program and know exactly what line or lines of code are responsible.
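A minimal TypeScript sketch of that idea follows. The three pipeline steps and the `check` helper are hypothetical, not taken from any particular codebase or test framework; the assumption is simply that each step is a pure function of its arguments.

```typescript
// Hypothetical pipeline split into small pure steps.
const parsePrice = (raw: string): number => Number.parseFloat(raw);
const applyDiscount = (price: number, percent: number): number =>
  price * (1 - percent / 100);
const formatPrice = (price: number): string => price.toFixed(2);

// Each step depends only on its arguments, so each check below
// exercises exactly one function. A failure names the broken step.
function check(name: string, actual: unknown, expected: unknown): void {
  if (actual !== expected) {
    throw new Error(`${name}: expected ${String(expected)}, got ${String(actual)}`);
  }
}

check("parsePrice", parsePrice("80.00"), 80);
check("applyDiscount", applyDiscount(80, 25), 60);
check("formatPrice", formatPrice(60), "60.00");
```

If `applyDiscount` were wrong, only the second check would fail, and the error message would point straight at it.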

A "core" strength of FP is its ability to do several things at once (parallelism, a prime domain for large list processing), since functions that share no mutable state can safely run side by side.
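The sketch below, in TypeScript, shows why pure functions suit that kind of work. It does not actually run anything in parallel; it only demonstrates that a list can be partitioned, processed chunk by chunk, and recombined with the same result. The `chunk` helper and the data are hypothetical.

```typescript
// A pure function: its result depends only on its argument.
const square = (n: number): number => n * n;

// Split a list into fixed-size chunks (hypothetical helper).
function chunk<T>(items: T[], size: number): T[][] {
  const chunks: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    chunks.push(items.slice(i, i + size));
  }
  return chunks;
}

const sum = (values: number[]): number => values.reduce((a, b) => a + b, 0);

const numbers = [1, 2, 3, 4, 5, 6];

// Sequential: one pass over the whole list.
const sequential = sum(numbers.map(square));

// Partitioned: each chunk could be handed to a separate worker, because
// no chunk reads or writes state owned by another. Here the chunks simply
// run one after another; only their independence is being demonstrated.
const partialSums = chunk(numbers, 2).map((part) => sum(part.map(square)));
const combined = sum(partialSums);

console.log(sequential, combined); // 91 91
```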

Summary

FP is only going to gain a greater and greater share of the software development world as more and more mathematically inclined developers join the ranks of the IT industry. To be sure: Java and .NET have a lot of great stuff on Maven and Nuget, respectively.

You can even collaborate on open-source projects in .NET and Java and even C++ in this day and age of 2022. In fact, Microsoft's documentation and a large portion of the Microsoft code library are now open source.

However, no open-source communities are as active as the ones you will find for FP (Lisp, Go, NodeJS, Haskell, Clojure, F#). Whatever Lisp or Haskell may lack in worldwide development footprint they make up for in developer enthusiasm and in evangelizing the FP mindset and how it can lead to more concise application code that is easier to test and reason about.

More than anything else, FP is a mindset, or a "mental model" of how program messaging is defined.

More specifically, it is the mindset of passing functions as arguments to other functions (higher-order functions, applied throughout the program) instead of relying on object composition and state tracking. This greatly reduces the need for state tracking, and therefore much of the problem space, and it also opens up the application code to mathematical proof modeling of the application behavior requirements: a huge productivity gain if your development team is a heavily math-oriented bunch.
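To make the functions-as-arguments idea concrete, here is a minimal TypeScript sketch. The names `twice`, `compose`, `increment`, and `double` are hypothetical helpers written for this example, not part of any library.

```typescript
type Transform<T> = (value: T) => T;

// Takes a function as an argument and returns a new function.
const twice = <T>(f: Transform<T>): Transform<T> => (value) => f(f(value));

// Takes two functions and returns their composition (g runs first).
const compose = <A, B, C>(f: (b: B) => C, g: (a: A) => B) =>
  (a: A): C => f(g(a));

const increment = (n: number): number => n + 1;
const double = (n: number): number => n * 2;

// New behavior is built by combining functions, not by tracking state.
const addTwo = twice(increment);
const incrementThenDouble = compose(double, increment);

console.log(addTwo(5));              // 7
console.log(incrementThenDouble(5)); // 12
```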

OO vs. FP

Lisp is short for "list processor". Naturally, programs involving list manipulation and uniform message processing are very good candidates for FP code, which excels at very specific tasks that involve inputs and outputs and not much else, at huge scale.

Like most software development trends, FP is not new (Lisp was developed at MIT in the late 1950s), but it is experiencing a renaissance thanks to developers rediscovering it and extolling its virtues to the larger (and, since the 1990s, largely OO) software development community.

A little bit of FP can go a long way and can clear away complexity in code like so much brushfire. But don't go using it to hunt for problems in existing OO code that don't exist.
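A small TypeScript sketch of that inputs-and-outputs style of list processing is shown below. The `Message` shape and the sample data are hypothetical; the sketch folds a list of uniform messages into a summary without mutating the input.

```typescript
// Hypothetical message shape and data.
type Message = { topic: string; bytes: number };

const messages: Message[] = [
  { topic: "orders", bytes: 120 },
  { topic: "audit", bytes: 300 },
  { topic: "orders", bytes: 80 },
];

// Fold the list into per-topic byte totals. Each step returns a new
// totals object instead of modifying the previous one.
const bytesByTopic = messages.reduce<Record<string, number>>(
  (totals, m) => ({ ...totals, [m.topic]: (totals[m.topic] ?? 0) + m.bytes }),
  {},
);

console.log(bytesByTopic); // { orders: 200, audit: 300 }
```

Copying the totals object on every step keeps the fold pure at the cost of extra allocation; for very large lists a locally scoped mutable accumulator would be a reasonable trade.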

FP Quotes

"You can use OO and FP at different granularity. Use OO modeling to find the right places in your application to put boundaries. Use FP techniques within those boundaries" -OOP vs FP

"OOP is not natural for the human brain, our thought process is centered around “doing” things — go for a walk, talk to a friend, eat pizza. Our brains have evolved to do things, not to organize the world into complex hierarchies of abstract objects." -FP essay

"OOP does not have enough constraints in place that would prevent bad programmers from doing too much damage." -Ilya Suzdalnitski

"Encapsulation is the trojan horse of OOP. It is actually a glorified global mutable state" -OO, the trillion dollar disaster

References:

https://betterprogramming.pub/object-oriented-programming-the-trillion-dollar-disaster-92a4b666c7c7

https://dev.to/bhaveshdaswani93/oop-vs-fp-with-javascript-39jf

https://www.lihaoyi.com/post/WhatsFunctionalProgrammingAllAbout.html#the-core-of-functional-programming