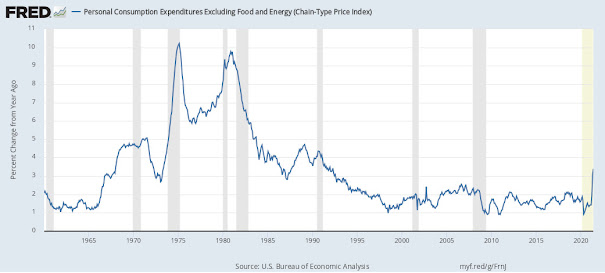

Inflation 2022

FP Lite

The imperative/object-oriented vs. functional/declarative debate has confused many a beginning developer, and even the seasoned developer writing this article. Here I attempt to shed some light.

Why FP? For starters, controlling object hierarchies and keeping track of object state is one of the most difficult challenges of OO design. Additionally, we live in a world of interactions, not models in a fixed state. FP aims to simplify those interactions by reducing the scope of what can go wrong: it favors fixed operations whose output is fully determined by their input (100% predictable at runtime), as opposed to the polymorphism and dynamic composition so often seen in OO.

Many an FP project begins as a plain NodeJS (JavaScript) project, only to move to TypeScript once the need for type checking and compilation becomes too obvious to ignore. Why is this?

There seems to be a push and pull between the flexibility that JS offers (the kind of "super dynamism" inherent in the JS prototype model) and the adherence to SOLID principles that typed modeling with ahead-of-time compilation provides. When implemented reasonably and practically, FP can greatly simplify a project, or at least simplify its lambda-izable code.

The Virtues of FP

- FP's versatility lies in its ability to abbreviate logical statements with lambda calculus, thereby shrinking the solution space and reducing cognitive load for the developer (for enthusiastic students of Calculus theorems, at least 😃)

- Also => x => x.Happiness

The Virtues of OOP

- Since the release of Smalltalk, OOP has seen widely successful iterations in Java and C#

- Its footprint is vast and its legacy rich (C# 10, .NET 6, Java 18)

- OO development is still the best way humans have to model entities and complex hierarchies; FP does not magically solve or remove the OO state-tracking problem (and actually makes debugging less transparent in many cases); it merely pushes it to another corner of the development space (the function implementations).

When Alan Kay coined the term “Object Oriented Programming” in the 1960s, he had a background in biology and was attempting to make computer programs communicate the way living cells do. Kay’s big idea was to have independent programs (cells) communicate by sending messages to each other. The state of the independent programs would never be shared with the outside world (encapsulation). Years later, after OO had achieved market dominance, Kay said: "I’m sorry that I long ago coined the term ‘objects’ for this topic because it gets many people to focus on the lesser idea. The big idea is messaging."

FP simplified: function interaction for behavior vs. object interaction for behavior.

Indeed.

In my experience with it, FP yielded successful programs, but I never felt that I had intimate knowledge of how things worked outside the modules I implemented or modified, because the code was not inherently readable (to me, that is). When things go wrong, that can be a problem no matter how predictable the (wrong) results are. Certainly, with time and exposure, an OO developer can learn to reason about FP code as effectively as, if not more effectively than, OO code; a lot of this debate is, at its core, a matter of style and mental model and has no right or wrong answer.

Oftentimes, the best decision is to use a little bit of both OO and FP. With the introduction of lambda expressions in C#, .NET became a veritable proving ground for developers who realized they could greatly simplify .NET code by flattening convoluted, bug-prone foreach and for loops into simple lambda maps. Likewise, making certain properties readonly in an OO project will shine a (useful) bright light on just how much your code has spiraled out of control.
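C has no lambdas, but a function pointer gives the same "map" shape that C# lambdas enable. The sketch below is a hypothetical illustration (square_all_in_place, square and map_ints are made-up names): the loop version overwrites its input, while the map version takes a const input, so it cannot modify it, and writes each result to a separate output array.

```c
#include <stddef.h>

/* Imperative style: overwrites the caller's data in place. */
void square_all_in_place(int *values, size_t count)
{
    for (size_t i = 0; i < count; i++) {
        values[i] = values[i] * values[i];
    }
}

/* A pure "mapper": its result depends only on its argument. */
int square(int x)
{
    return x * x;
}

/* Functional style: a higher-order map. The input is const, so this
   function cannot change it; results go into a separate output array. */
void map_ints(const int *input, int *output, size_t count, int (*fn)(int))
{
    for (size_t i = 0; i < count; i++) {
        output[i] = fn(input[i]);
    }
}
```

The loop still exists, but it is written once inside map_ints; each call site only states the transformation, for example map_ints(prices, doubled, n, some_pure_function).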

To those who claim that FP will magically prevent all bugs, don't try to obscure the truth:

You cannot run away from or completely shield yourself from complexity.

Not by declaring, "We are an FP team now, so all behavior must be function interactions, with no mutable state anywhere!" That isn't what FP promises. Implemented practically, however, FP is a far superior solution for certain use cases and certain development teams (think: very math-oriented software engineers).

FP Drawbacks and warnings

- Sometimes not feasible when trying to model application entities and their behaviors

- The same brevity that some developers love in FP can cause readability issues for others

- Often the tests for FP become so large that any gain in brevity is kind of negated

OO Drawbacks and warnings

- Type modeling can lead to an excessive number of types, increasing program size and complexity

- Bugs related to state mutations as a result of function/object side-effects

- More problem space for things to go wrong

More than any of the three issues above, the biggest problem I have with functional programming (in the Node ecosystem at least) is that the entire supply chain is open source and that can lead to neglected, abandoned, sabotaged or broken projects without warning. Love or Hate MS, they make sure (some) bugs are fixed and libraries are maintained.

When a NodeJS package/module that you depend on becomes obsolete or is compromised, teams are forced to find an alternative and, in the worst cases, entirely rebuild functionality with completely new dependencies.

And related to this, there is a bit too much over-distribution/splintering of source code going on in many (most?) FP Node projects. Twenty dependencies in a project, sure. But I've worked on Node projects that involved only a few functions yet relied on hundreds of NPM package dependencies, many of which were several versions out of date (and couldn't be updated to current because, well, y'know, it's a breaking Node package update! Weeeeee... 😃 (Such is the life we live.))

On the flipside, there is something immensely liberating about having not only a completely commercial-free OS like Linux, but also, free IDEs and a worldwide bazaar of NPM packages that fulfill virtually any development requirement that one might ever encounter.

FP style emphasizes ensuring your code has "high signal-to-noise ratio"; when things break, we want to know where it hurts and why, immediately. To paraphrase Kent Beck in his book on unit testing, stack traces of poorly written integration tests and production failures often tell us there is a problem in our application, "but, where oh where?".

If we keep our abstractions tightly wound and limited (and oh boy does FP do that in spades), we are much more likely to notice an issue in our program quickly and to know exactly which line or lines of code are responsible.

Summary

FP is only going to gain a greater and greater share of the software development world as more and more mathematically inclined developers join the ranks of the IT industry. To be sure, Java and .NET have a lot of great stuff on Maven and NuGet, respectively.


You can even collaborate on open-source projects in .NET, Java and even C++ in this day and age (2022). In fact, the MS documentation and a large portion of the MS code library are now open source.

However, no open-source communities are as active as the ones you will find for FP languages and ecosystems (Lisp, NodeJS, Haskell, Clojure, F#). Whatever Lisp or Haskell may lack in worldwide development footprint, they make up for in developer enthusiasm and in evangelizing the FP mindset and how it can lead to more concise application code that is "easier to test and reason about".

More than anything else, FP is a mindset, or a "mental model" of how program messaging is defined.

More specifically, it is the mindset of passing functions as arguments to other functions (higher-order functions) throughout the program, instead of relying on object composition and state tracking. This not only reduces the need for state tracking, and therefore much of the problem space, but also opens up the application code to mathematical proof modeling of the application's behavioral requirements: a huge productivity gain if your development team is a heavily math-oriented bunch.
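Passing functions to functions is easiest to see with a fold (also called reduce). In this hypothetical C sketch, fold_left threads an accumulator through a caller-supplied step function rather than updating shared state; add and max_of are illustrative step functions, not part of any library.

```c
#include <stddef.h>

/* A step function combines the running result with the next element. */
typedef int (*step_fn)(int accumulator, int element);

/* Left fold: step(...step(step(initial, xs[0]), xs[1])..., xs[n-1]).
   The accumulator is a local variable, so no state outlives the call. */
int fold_left(const int *xs, size_t n, int initial, step_fn step)
{
    int accumulator = initial;
    for (size_t i = 0; i < n; i++) {
        accumulator = step(accumulator, xs[i]);
    }
    return accumulator;
}

int add(int acc, int x) { return acc + x; }

int max_of(int acc, int x) { return x > acc ? x : acc; }
```

The same fold_left computes a sum (fold_left(xs, n, 0, add)) or a maximum (fold_left(xs, n, xs[0], max_of)); only the function argument changes.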

Lisp is short for "list processor". Naturally, programs involving list manipulation and uniform message processing are very good candidates for FP, which excels at very specific tasks that involve inputs and outputs and not much else, at huge scale.

Like most software development trends, FP is not new (Lisp was developed at MIT in the late 1950s), but it is experiencing a renaissance thanks to developers rediscovering and extolling its virtues to the larger (and largely OO since the 1990s) software development community.

A little bit of FP can go a long way and can clear away complexity in code like so much brushfire. But don't go using it to look for problems in existing OO code that don't exist.

FP Quotes

"You can use OO and FP at different granularity. Use OO modeling to find the right places in your application to put boundaries. Use FP techniques within those boundaries" -OOP vs FP

"OOP is not natural for the human brain, our thought process is centered around “doing” things — go for a walk, talk to a friend, eat pizza. Our brains have evolved to do things, not to organize the world into complex hierarchies of abstract objects." -FP essay

"OOP does not have enough constraints in place that would prevent bad programmers from doing too much damage." -Ilya Suzdalnitski

"Encapsulation is the trojan horse of OOP. It is actually a glorified global mutable state" -OO, the trillion dollar disaster

References:

https://betterprogramming.pub/object-oriented-programming-the-trillion-dollar-disaster-92a4b666c7c7

https://dev.to/bhaveshdaswani93/oop-vs-fp-with-javascript-39jf


What is the Fed?

The Fed

The Federal Reserve, also known as the "Fed", the "lender of last resort" and a "bank to other banks", is the central bank of the United States and sets U.S. monetary policy. It influences the national money supply, which enables it to steer national interest rates. These levers are used to keep two major indicators in check: unemployment and inflation. The Fed also provides short-term loans (a kind of "stimulus") to banks in times of stress.

History

For a long time, Americans feared that a central bank would put too much power in too few hands. However, in 1907, the Knickerbocker Trust Co. collapsed, which led to a run on the banks, and the banks didn't have sufficient cash reserves to honor customers' withdrawal requests. To quell the panic, J.P. Morgan and other wealthy private Americans made loans to the banks to get past the crisis. It was at this point that the need for a central banking authority became clear to America, leading to the Federal Reserve Act of 1913.

What the Federal Reserve does

The United States developed the Fed as a way to keep the economy healthy and provide reserves when necessary to prevent panic. It is a tool, most notably in its control of the federal funds rate.

The Fed sets a target for this "root" lending rate (the overnight rate at which banks lend reserves to each other) and separately sets the discount rate at which it lends to banks directly. These rates ripple out to the rates banks charge companies and individuals. This is what is referred to as "the Fed changing the interest rate".

- Higher rates to cool a hot economy lead to more saving and less spending, a contraction of the money supply. Prices rise more slowly: lower inflation.

- Lower rates to stimulate a flagging economy lead to more spending, borrowing and investing, an expansion of the money supply. Prices rise faster: higher inflation.
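To make the "trickle down" concrete, here is a sketch that computes the fixed monthly payment on an amortizing loan with the standard formula payment = P·r / (1 − (1 + r)^−n), where r is the monthly rate and n the number of months. The loan amount and rates used below are hypothetical, chosen only to show how a higher borrowing rate changes the cost of a loan; monthly_payment and power are made-up names.

```c
/* Raises base to a non-negative integer power by repeated multiplication
   (avoids needing to link the math library for pow). */
static double power(double base, int exponent)
{
    double result = 1.0;
    for (int i = 0; i < exponent; i++) {
        result *= base;
    }
    return result;
}

/* Fixed monthly payment for a fully amortizing loan.
   principal: amount borrowed; annual_rate: e.g. 0.06 for 6%; months: term. */
double monthly_payment(double principal, double annual_rate, int months)
{
    double r = annual_rate / 12.0;           /* monthly rate */
    if (r == 0.0) {
        return principal / months;           /* zero-interest edge case */
    }
    double growth = power(1.0 + r, months);  /* (1 + r)^n */
    return principal * r * growth / (growth - 1.0);
}
```

For a hypothetical $300,000, 30-year (360-month) loan, monthly_payment(300000.0, 0.03, 360) comes to about $1,265 a month, while monthly_payment(300000.0, 0.06, 360) comes to about $1,799: the same house costs roughly 40% more per month.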

The Fed raises interest rates to check inflation and lowers rates to stimulate a slowing economy and support employment. The Fed is essentially designed to keep money flowing and prevent major disruptions in our economy. The goal is to keep the U.S. economy healthy.

To hit its target, the Fed performs "open market operations", which traditionally means buying and selling short-term U.S. government securities. To fight more recent financial straits, however, the Fed has also bought longer-term securities, such as long-term Treasuries and mortgage-backed securities (so-called quantitative easing).

The Fed essentially performs a balancing act to ensure that there are always enough buyers and sellers. Put another way, it ensures that too many dollars in circulation relative to what people are spending doesn't lead to runaway inflation, and that too few dollars in circulation doesn't lead to a recession or a tightening of overall lending that causes the economy to stagnate or crash.

Fed criticism

The infamous line from Fed Chairman Ben Bernanke during the 2008 financial crisis, "using a computer to mark up the size of the account" 👀, is not a typo or misquote. It is, however, widely misinterpreted. In normal times the Fed rarely needs to expand the money supply by literally printing dollars; it merely purchases securities with dollars to put those dollars back into circulation.

But when the Fed determines that it doesn't have enough dollars to buy the securities required to stabilize the banking system, it has to (heaven forbid) create dollars to meet the need for lending during a lending freeze, as was seen in the financial crisis of 2008 and 2009 and in the spring of 2020, when COVID-19 first ravaged the American economy. If you think about it rationally, the United States usually increases overall productivity year over year. With this increase in productivity, and the accompanying increase in securities valuations, you need dollars (or other tangible liquid assets like precious metals) behind the increased productivity and valuations; otherwise, when there is an adverse event and people rush to withdraw money and liquidate their securities, there will not be enough money.

Printing dollars is necessary because our economic systems, and specifically paper currency, are man-made, and the money supply cannot expand without human action (unless we put the economy into some kind of smart contract, but I'd advise against that).

If you expand the overall wealth in the U.S. by 3% or 5%, should the money supply not also expand to reflect this expansion?

If it doesn't, then $1 in current USD value would, by my rough estimate, be worth about $3,378, which would make small transactions impractical. I suppose we could instead mint more coins, but coins are more expensive to make and heavier.
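The question above can be framed with the textbook quantity equation of money, MV = PY (money supply × velocity = price level × real output). As a simplified sketch that assumes velocity V stays constant (a strong assumption in practice), holding the money supply M fixed while real output Y grows at a rate g per year forces the price level P down:

$$
P_t = \frac{M V}{Y_t}, \qquad Y_t = Y_0 (1 + g)^t
\quad\Longrightarrow\quad
P_t = \frac{P_0}{(1 + g)^t}, \qquad P_0 = \frac{M V}{Y_0}
$$

With g = 3%, (1.03)^24 ≈ 2.03, so under these assumptions prices would roughly halve about every 24 years: steady deflation, with each dollar buying ever more, which is the scenario the question describes.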

The need for the Fed

There will never be enough money to put the U.S. economy on autopilot or to obviate the need for the Fed and its independence. There will always be a need for the balancing act of adjusting interest rates, printing money to match productivity and lending needs, and the extreme rescue measure of printing money to make extremely large loans that keep key so-called "too big to fail" institutions solvent.

And that is to be expected. All of this is due to human nature and the fear instinct. When things go really bad (usually due to lack of regulatory oversight and/or financial fraud), people are going to run to the bank for cash and attempt to liquidate securities. And when people's and companies' bank and IRA balances are tied to institutions that evaporate overnight, the FDIC can't help beyond $250,000 per depositor, per insured bank, per ownership category. For companies and individuals with very high balances, you cannot expect the bank to have all the cash on hand to match everyone's balance (to say nothing of the ability to liquidate any of the myriad securities banks now offer to customers).

Banks are in the lending business, not the saving business. And there is a general expectation that there won't be a run on the bank and that customers will not all rush to liquidate long-term assets at once. So when these kinds of things happen (or when things start to tip in that direction), the Fed steps in to provide everyone their "tomorrow money", today.

This act, and the act of pulling the interest-rate lever, may not look very fair, but it is necessary to rescue the economy in times of a major adverse event, to counter economic booms and busts and to limit inflation.

When the next financial crash occurs, I'm not counting on Elon Musk and Bill Gates to be as generous as J.P. Morgan.

References:

https://www.youtube.com/watch?v=M7nj2X-yl_U

https://www.youtube.com/watch?v=qoUmSer2IxA

https://www.nytimes.com/2020/03/21/opinion/-coronavirus-stimulus-trillion.html

Using Azure Key Vault Secrets in ASP.NET Core

This is, in my opinion, one of the coolest features of Azure. Azure Key Vault is an Azure service where you can store secrets (such as connection strings and API keys), certificates and cryptographic keys that you want to keep safe and out of source control.

I've worked with the process for managing secrets in AWS, and in my experience (each usage/implementation is different), AWS Secrets Manager is slightly less simple (meaning it is pretty simple too, but I'm partial to Azure).

To enable storing secrets in Azure, you first create an Azure Key Vault in your Azure account. Then you add secrets (for instance, the client ID and secret key for an API your apps use, an artifact repository URI, database connection strings, etc.).

Once the secrets are created, you register Azure Key Vault as a configuration source when building your application's host (typically in Program.cs), as such:

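```csharp
using Microsoft.Azure.KeyVault;
using Microsoft.Azure.Services.AppAuthentication;
using Microsoft.Extensions.Configuration.AzureKeyVault;

// Chained on the host builder in Program.CreateHostBuilder(args):
.ConfigureAppConfiguration((context, config) =>
{
    // Authenticates as the app's managed identity in Azure, or as the signed-in developer locally
    var azureServiceTokenProvider = new AzureServiceTokenProvider();
    var keyVaultURI = "https://myvault.vault.azure.net/";
    var keyVaultClient = new KeyVaultClient(
        new KeyVaultClient.AuthenticationCallback(azureServiceTokenProvider.KeyVaultTokenCallback));

    // Register Key Vault as a configuration source using the authenticated client
    config.AddAzureKeyVault(keyVaultURI, keyVaultClient, new DefaultKeyVaultSecretManager());
});
```

Once wired up, you can refer to your secrets from that app, both on-prem and in the cloud (the transfer is encrypted with TLS), just as you would reference an appsettings value through an IConfiguration object, a la: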

```csharp
var keyVal = _configuration["mySuperSecretKeyInAzureKeyVault"];
```

Reference: https://azure.microsoft.com/en-us/services/key-vault/

Localization in ASP.NET Core using Resource (.resx) files

Localization in ASP.NET Core works much the same way as it did in legacy ASP.NET.

You need only create .resx files with your translations and reference them through an IStringLocalizer<YourControllerName> instance injected into your controller; the controller action can then use it to supply the translated strings that the model presents in the UI. The supported cultures are configured in Startup.ConfigureServices:

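```csharp
// In Startup.ConfigureServices: enables IStringLocalizer<T> and looks for .resx files under /Resources
services.AddLocalization(options => options.ResourcesPath = "Resources");

services.Configure<RequestLocalizationOptions>(options =>
{
    options.SetDefaultCulture("en-US");
    options.AddSupportedCultures("en-US", "es-CO", "fr-CA");
    options.AddSupportedUICultures("en-US", "es-CO", "fr-CA");
});

// In Startup.Configure: app.UseRequestLocalization();
```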

How does ACH work?

ACH stands for Automated Clearing House. ACH is essentially an electronic way of applying increments (credits) and decrements (debits) to financial accounts.

ACH can process any transaction so long as the banks or financial institutions in the transaction are ACH compliant. There exists a nationwide ACH network for the settlement of transactions between financial institutions. Beginning in the early 1970s, ACH emerged as the preferred method for transferring money between banks, especially routine, scheduled payments, without the need to be physically present.

During the 1970s in America, ACH organizations were created, such as the long-standing NACHA (originally the National Automated Clearing House Association). ACH files use standard entry class (SEC) codes and transaction codes, with standardized formatting for ACH transaction messaging. ACH files contain information describing each ACH transaction, such as:

- Transaction type: debit or credit (+/-)

- Whether transaction is posting to deposit account, loan account or a corporate GL

- Customer Name

- Account

- Routing

- Customer ID

- Next day or same day settlement

- Settlement date
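To make the file structure concrete, here is a sketch that formats a single Entry Detail record (record type 6), the 94-character fixed-width line that carries one transaction in a NACHA file. The field positions follow the NACHA layout as I understand it and are worth double-checking against the current NACHA Operating Rules; the function name, parameters and sample values are hypothetical, and transaction codes 22 (checking credit) and 27 (checking debit) are shown only as examples.

```c
#include <stdio.h>
#include <string.h>

#define ACH_RECORD_LENGTH 94

/* Formats one Entry Detail (type 6) record into out, which must hold
   ACH_RECORD_LENGTH + 1 bytes. Returns 0 on success, -1 on bad input.
   Text fields longer than their slot are truncated.
   Layout (1-based positions):
     1      record type code '6'
     2-3    transaction code (e.g. 22 = checking credit, 27 = checking debit)
     4-12   receiving DFI routing number (8 digits + check digit)
     13-29  DFI account number, left-justified
     30-39  amount in cents, zero-filled
     40-54  individual identification number
     55-76  individual name
     77-78  discretionary data (left blank here)
     79     addenda record indicator (0 or 1)
     80-94  trace number */
int format_entry_detail(char *out, int transaction_code, const char *routing,
                        const char *account, long long amount_cents,
                        const char *individual_id, const char *name,
                        int has_addenda, const char *trace_number)
{
    if (strlen(routing) != 9 || strlen(trace_number) != 15 ||
        amount_cents < 0 || amount_cents > 9999999999LL ||
        transaction_code < 10 || transaction_code > 99) {
        return -1;
    }
    int written = snprintf(out, ACH_RECORD_LENGTH + 1,
                           "6%02d%-9.9s%-17.17s%010lld%-15.15s%-22.22s  %d%-15.15s",
                           transaction_code, routing, account, amount_cents,
                           individual_id, name, has_addenda ? 1 : 0,
                           trace_number);
    return written == ACH_RECORD_LENGTH ? 0 : -1;
}
```

For example, a hypothetical $123.45 payroll credit would be format_entry_detail(rec, 22, "123456780", "123456789", 12345, "EMP001", "JANE DOE", 0, "123456780000001"), where the amount is passed in cents to avoid floating-point rounding.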

"Prenotifications" are test ACH transactions using zero dollar transactions to validate source or destination account numbers.

ACH operators are centralized clearinghouses that settle transactions for participating financial institutions. The two ACH operators are the Federal Reserve Banks (FedACH) and The Clearing House's Electronic Payments Network (EPN).
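A prenote asks the receiving bank to confirm an account, but a cheaper local sanity check can run before anything is sent to an ACH operator: the ABA routing number checksum. In a valid 9-digit routing number d1...d9, 3×(d1+d4+d7) + 7×(d2+d5+d8) + 1×(d3+d6+d9) is a multiple of 10. The function name below is hypothetical, and passing the check only means the number is well-formed, not that the bank or account exists.

```c
#include <ctype.h>
#include <stdbool.h>
#include <string.h>

/* Returns true if routing is exactly nine digits whose weighted sum
   (weights 3, 7, 1 repeating) is divisible by 10. */
bool is_valid_aba_routing(const char *routing)
{
    static const int weights[9] = {3, 7, 1, 3, 7, 1, 3, 7, 1};
    if (strlen(routing) != 9) {
        return false;
    }
    int sum = 0;
    for (int i = 0; i < 9; i++) {
        if (!isdigit((unsigned char)routing[i])) {
            return false;
        }
        sum += weights[i] * (routing[i] - '0');
    }
    return sum % 10 == 0;
}
```

For instance, "123456780" passes (3×12 + 7×15 + 1×9 = 150), while "123456789" fails, because changing the last digit shifts the sum to 159.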

Transaction types that do NOT use ACH and why:

- Wire transfer: differing regulations, higher dollar amounts

- Debit card: requires proprietary rules and a network

- Credit card: a line of credit only

- ATM: card-based with PIN (differing rules)

ACH's relationship with cryptocurrency transfers is interesting (apparently wire transfers of money in and out are far more expensive than ACH). See this recent NACHA article: https://www.nacha.org/news/crypto-and-ach-and-coming-pair

ACH is a vital component of the payments ecosystem, and though it is uniquely American, other countries have similar clearinghouses with different transaction file/message formats. Knowing ACH's background and basic file structure is useful, as you may need to understand how it works some day at some job.

Regardless of what happens with cryptocurrencies and a possible U.S. central bank digital currency (a "digital dollar" backed by the full faith and credit of the U.S. government), ACH technology, and technology built on top of it, is here to stay for a long, long time.

Code Readability vs. Code Golf

Code Golf: https://code-golf.io, https://codegolf.stackexchange.com (have fun, you can learn lots)

ASM, clear for some developers; not most:

Even complex languages can be simplified somewhat by using logical naming conventions to identify what the entities are and what operations are doing.

Clear and consistent naming conventions for all members within the code of an application go a long way toward making your code open to collaboration and/or extension by others. In much the same way people prefer different kinds of poetry, we developers prefer certain programming languages or styles (.NET vs. Java vs. NodeJS vs. Python vs. C and C++; message queues vs. RDBMS; OO vs. FP) more or less than others. We should, however, as far as possible, make our code clearly formatted and named so that anyone can easily interpret it. We should be as clear and consistent as possible and comment only when variable or function names alone can't explain the behavior.

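```c
#include <stdio.h>

/* Prints one verse: x is the animal, y is its sound.
   f+24 points at ", E-I-E-I-O,\n" inside the format string itself. */
void o(char* x, char* y)
{
    char* f=
    "Old MacDonald had a farm, E-I-E-I-O,\n"
    "And on that farm he had a %s%.13s"
    "With a %s %s here and a %s %s there,\n"
    "Here a %s, there a %s, everywhere a %s %s,\n"
    "%.35s!\n";
    printf(f,x,f+24,y,y,y,y,y,y,y,y,f);
}

int main(void)
{
    o("cow", "moo");
    return 0;
}
```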
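For contrast, here is the same verse written for readability rather than brevity. This is a sketch, not a canonical version: format_verse and REFRAIN are hypothetical names, and writing into a caller-supplied buffer with snprintf (instead of printing directly) is a design choice that makes the output easy to test. Called with "cow" and "moo", it produces the same five lines as the golfed function o.

```c
#include <stdio.h>

/* The refrain shared by the first and last lines of every verse. */
static const char *const REFRAIN = "Old MacDonald had a farm, E-I-E-I-O";

/* Writes one verse for the given animal and its sound into buffer.
   Returns the length of the full verse (as snprintf does), so a return
   value >= size means the buffer was too small. */
int format_verse(char *buffer, size_t size, const char *animal, const char *sound)
{
    return snprintf(buffer, size,
                    "%s,\n"
                    "And on that farm he had a %s, E-I-E-I-O,\n"
                    "With a %s %s here and a %s %s there,\n"
                    "Here a %s, there a %s, everywhere a %s %s,\n"
                    "%s!\n",
                    REFRAIN,
                    animal,
                    sound, sound, sound, sound,
                    sound, sound, sound, sound,
                    REFRAIN);
}
```

It is longer than the golfed version, but every line of the song is visible as written, and nothing depends on counting characters inside the format string.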

A Timeless Book (by Jerry Fitzpatrick)

TL;DR

This book gets right to the point about what it takes to build great software and is too valuable for any software development professional not to read; and it contains just 172 pages! 😃

Read this book.

Plan Before Implementing

Proper and properly named abstractions to match problem domain and purpose. Thoroughly documented and well-understood behavioral interactions among all accessible components and at minimum a solid high level understanding of all dependencies; know when not to re-invent the wheel and instead utilize a proven 3rd party or open source tool.

Keep it Small

YAGNI ("You ain't gonna need it") is a very real thing. Development teams should focus on working prototypes that can be ironed out for production vs. forever "ideal" implementations that never make it to an actual user's screen.

Write Clearly

Treat a rough draft as a rough draft by encouraging code reviews and frequent minor refactoring to achieve code clarity for the next developers who will inevitably read (and need to understand in order to change or extend) your logic in the future.

Prevent Bugs

Meticulously control scope and member access and understand all ramifications of behavioral code points within the application. Every area of variation/mutation/state change should be covered by tests that are both named and implemented to clearly demonstrate the function/class/behavior being tested.

All tests are important for confidence/assurance in ongoing development but TDD (writing tests before the implementation a la Kent Beck) is not a replacement for good, cohesive design. That being said, I think the TDD backwards approach can help start great design discussion among developers when the design is somewhat unclear (tests, particularly broken tests will expose the innards that most warrant discussing).
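As a small illustration of tests whose names state the behavior they demonstrate, here is a hypothetical C sketch; discounted_price_cents and both test names are made up for the example, and plain assert stands in for whatever test framework a team actually uses.

```c
#include <assert.h>

/* Hypothetical function under test: subtracts a discount from a price,
   but never lets the result go below zero. */
int discounted_price_cents(int price_cents, int discount_cents)
{
    if (discount_cents > price_cents) {
        return 0;
    }
    return price_cents - discount_cents;
}

/* Each test's name states the behavior it demonstrates. */
void test_discount_is_subtracted_from_price(void)
{
    assert(discounted_price_cents(1000, 250) == 750);
}

void test_discount_larger_than_price_yields_zero_not_negative(void)
{
    assert(discounted_price_cents(500, 800) == 0);
}
```

When the second test fails, its name alone tells the reader which rule broke, before anyone opens a stack trace.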

Make the Program Robust

Utilize agreed-upon code formatting and pattern implementation standards and non-antagonistic code reviews to ensure these standards are being followed.

Utilize information hiding, useful categorization and encapsulation so that code can be extended without exposing clients to potential issues.

Do not try/catch and log everything unless necessary for compliance or a particular initiative being monitored. Instead, use try/finally for cleanup and let exceptions bubble up to the surface application code. Make exceptions very visible (but exit the program gracefully if an exception is not "handleable"), and add tests to prevent the same (preventable) issues from recurring.

Exceptions that are quietly caught and logged hide problems in the code that need immediate attention.

Use solid CI/CD and automated testing that tests for easily definable application behaviors.

Prevent Excess Coupling

Favor atomic initialization: Initialize everything all at once versus incremental composition.

Discourage unnecessary extensibility points and instead expose what needs to be exposed with as little information sharing as possible.

Strive for immutable members wherever possible.

Cohesive, self-evident abstractions are of utmost importance.
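In C, atomic initialization and immutable members can be sketched with const-qualified struct members and a single designated initializer. ServerConfig and make_server_config are hypothetical names; the contrast is with incremental composition, where an object is created empty and then filled in by a series of setter calls.

```c
/* Hypothetical configuration type: every member is const, so values can
   only be set when the struct is initialized, never changed afterwards. */
typedef struct {
    const char *const host;
    const int port;
    const int timeout_ms;
} ServerConfig;

/* Atomic initialization: all fields are supplied together in one
   initializer, so no caller ever sees a half-built configuration. */
ServerConfig make_server_config(const char *host, int port, int timeout_ms)
{
    ServerConfig config = { .host = host, .port = port, .timeout_ms = timeout_ms };
    return config;
}
```

A later statement such as config.port = 9090; is rejected by the compiler, which is the point: changing a setting means building a new value rather than mutating a shared one.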

SUMMARY

The concepts so succinctly covered in Timeless Laws of Software Development apply to all types of code (imperative or declarative) and all languages.

While I've read many great software books and all have helped me become a better developer, no text has struck me as so plainly obvious and concise at explaining good software design concepts in such an easy-to-grok manner.

For a worthwhile read, check out Timeless Laws of Software Development by Jerry Fitzpatrick

.NET 5+: the Future of .NET

With .NET 5 (and, coming in hot down the pike, .NET 6), developers can use the languages (C#, F# and VB) and framework (.NET) they are familiar with to build applications that run on Windows, Linux or macOS (C++/CLI is also supported, but on Windows only). While .NET Core 1 and 2 lacked several expected features and felt fairly unsupportable, .NET 5 feels more familiar and addresses most issues (the networking improvements alone are notable).

There do exist more-than-slightly-subtle differences. For instance, ASP.NET Core 5 projects are structured differently: Global.asax.cs has been replaced by Program.cs, which contains the Main entry point, and the accompanying Startup.cs, which provides methods (ConfigureServices and Configure) for application configuration that execute only when your program starts (including setup for concerns like unhandled/last-chance exception handling).

```csharp
public class Startup
{
    public Startup(IConfiguration configuration)
    {
        Configuration = configuration;
    }

    public IConfiguration Configuration { get; }

    // This method gets called by the runtime. Use this method to add services to the container.
    public void ConfigureServices(IServiceCollection services)
    {
        services.AddDbContext<ApplicationDbContext>(options =>
            options.UseSqlServer(Configuration.GetConnectionString("DefaultConnection")));
        services.AddDefaultIdentity<IdentityUser>(options => options.SignIn.RequireConfirmedAccount = true)
            .AddEntityFrameworkStores<ApplicationDbContext>();
        services.AddControllersWithViews();
        services.AddRazorPages();
        services.AddMvc().AddViewLocalization(LanguageViewLocationExpanderFormat.Suffix);
        services.AddLocalization(options => options.ResourcesPath = "Resources");
        services.Configure<RequestLocalizationOptions>(options =>
        {
            options.SetDefaultCulture("en-US");
            options.AddSupportedCultures("en-US", "es-CO", "fr-FR");
            ........
```

An example .NET 5 ASP.NET Core Startup.cs, which replaces most Global.asax.cs functionality; Program.cs handles the rest.